kottke.org posts about programming

Interesting observation by Mitchell Hashimoto (creator of Vagrant and Ghostty) on how a company’s or product’s choice of programming language matters less in the age of agentic programming:

On the interesting side is how fungible programming languages are nowadays. Programming languages used to be LOCK IN, and they’re increasingly not so. You think the Bun rewrite in Rust is good for Rust? Bun has shown they can be in probably any language they want in roughly a week or two. Rust is expendable. It’s useful until it’s not then it can be thrown out. That’s interesting!

Hashimoto is talking about this complete rewrite of Bun (a JavaScript/TypeScript toolkit that’s owned by Anthropic and includes “a fast JavaScript runtime designed as a drop-in replacement for Node.js”) in a completely different programming language (Rust) in just 6 days.

6,755 commits, branch name claude/phase-a-port, PR opened May 8th, merged May 14th.

Six days. A full rewrite of a production-grade JS runtime, merged in six days.

Let that number sit in your mind for a second.

Whether or not you think that taking this six-day-old code completely rewritten & tested mostly by LLMs and deploying it in production is a good idea, it’s something that many more companies are comfortable doing. Simon Willison riffing on Hashimoto’s thoughts:

I was talking to someone who worked for a medium sized technology company with a pair of legacy/legendary iPhone and Android apps.

They told me they had just completed a coding-agent driven rewrite of both apps to React Native.

I asked why they chose that, given that coding agents presumably drive down the cost of maintaining separate iPhone and Android apps.

They said that React Native has improved a lot over the past few years and covered everything their apps needed to do.

And… if it turned out to be the wrong decision, they could just port back to native in the future.

This also applies to other layers of the tech stack (database, etc.) to various extents as well as to some other types of software, e.g. it’s trivial to export your bookmarks from one bookmark manager to another if they both have APIs or import/export capabilities — or, with a bit more effort, you can write your own.

BTW, this also goes for the big AI companies — it’s pretty easy to switch between different flagship models or to the increasingly powerful local models.

I had a lot of fun playing around with this collection of generative design tools, especially the textual ones. I wore out the “randomize” button on each of these. (via sidebar)

Lauren Goode convinced her editors at Wired to let her spend a couple of days at a tech company called Notion learning how to vibe-code (i.e. AI-assisted computer programming): Why Did a $10 Billion Startup Let Me Vibe-Code for Them — and Why Did I Love It?

Expanding a mermaid diagram or alphabetizing a list of dog breeds hardly seemed like sticking it to the coding man. But during my time at Notion I did feel as though a trapdoor in my brain had opened. I had gotten a shimmery glimpse of what it’s like to be an anonymous logical god, pulling levers. I also felt capable of learning something new — and had the freedom to be bad at something new — in a semi-private space.

Both vibe coding and journalism are an exercise in prodding, and in procurement: Can you say more about this? Can you elaborate on that? Can you show me the documents? With our fellow humans, we can tolerate a bit of imprecision in our conversations. If my stint as a vibe coder underscored anything, it’s that the AIs coding for us demand that we articulate exactly what we want.

During lunch on one of my days at Notion, an engineer asked me if I ever use ChatGPT to write my articles for me. It’s a question I’ve heard more than once this summer. “Never,” I told her, and her eyes widened. I tried to explain why — that it’s a matter of principle and not a statement on whether an AI can cobble together passable writing. I decided not to get into how changes to search engines, and those little AI summaries dotting the information landscape, have tanked the web traffic going to news sites. Almost everyone I know is worried about their jobs.

One engineer at Notion compared the economic panic of this AI era to when the compiler was first introduced. The idea that one person will suddenly do the work of 100 programmers should be inverted, he said; instead, every programmer will be 100 times as productive. His manager agreed: “Yeah, as a manager I would say, like— everybody’s just doing more,” she said. Another engineer told me that solving huge problems still demands collaboration, interrogation, and planning. Vibe coding, he asserted, mostly comes in handy when people are rapidly prototyping new features.

These engineers seemed reasonably assured that humans will remain in the loop, even as they drew caricatures of the future coder (“100 times as productive”). I tend to believe this, too, and that people with incredibly specialized skills or subject-matter expertise will still be in demand in a lot of workplaces. I want it to be true, anyway.

A very interesting read. Over the past several months, I have been reading a lot about LLMs and coding, particularly pieces by experienced coders who have switched to using LLMs to code. There is a lot of silly (and perhaps dangerous) hype around AI, but in that time, LLMs and supporting tools have gotten unnaturally good at programming, when directed properly. Here are some of the things I’ve read recently in case you’re curious about what’s possible now:

I’m curious to know if any experienced (or inexperienced) coders among you have tried any of the recent suite of AI-assisted coding tools and what your experience has been. (Your general thoughts about AI — particularly its potential downsides, which have been amply documented elsewhere — are best left for some other time & place. Thx.)

Elon Musk has claimed that his “DOGE” team has found evidence of “massive fraud” at the Social Security Administration, alleging that 150-year-old Americans were receiving benefit checks. I saw this claim easily debunked over the weekend, but Wired has a good writeup of it. Basically, the programming language that these systems are written in (COBOL) often uses an arbitrary date as a baseline…most commonly a date from 150 years ago.

Computer programmers quickly claimed that the 150 figure was not evidence of fraud, but rather the result of a weird quirk of the Social Security Administration’s benefits system, which was largely written in COBOL, a 60-year-old programming language that undergirds SSA’s databases as well as systems from many other US government agencies.

COBOL is rarely used today, and as such, Musk’s cadre of young engineers may well be unfamiliar with it.

Because COBOL does not have a date type, some implementations rely instead on a system whereby all dates are coded to a reference point. The most commonly used is May 20, 1875, as this was the date of an international standards-setting conference held in Paris, known as the “Convention du Mètre.”

These systems default to the reference point when a birth date is missing or incomplete, meaning all of those entries in 2025 would show an age of 150.

The SSA also automatically stops benefit payments whenever someone reaches the age of 115.
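To see how a missing birth date could turn into an age of 150, here is a minimal Python sketch. It assumes, as the Wired piece describes, that a missing date falls back to the May 20, 1875 reference point. The `REFERENCE_DATE` constant and the `age_on` helper are hypothetical names made up for illustration; nothing here is taken from SSA's actual systems.

```python
from datetime import date

# Assumption: the fallback reference point described in the quote above.
REFERENCE_DATE = date(1875, 5, 20)

def age_on(today, birth_date=None):
    """Return age in whole years, using the reference date when the birth date is missing."""
    born = birth_date or REFERENCE_DATE
    # Subtract one year if this year's birthday has not happened yet.
    had_birthday = (today.month, today.day) >= (born.month, born.day)
    return today.year - born.year - (0 if had_birthday else 1)

print(age_on(date(2025, 6, 1)))                     # missing birth date
print(age_on(date(2025, 6, 1), date(1950, 1, 15)))  # known birth date
```

With the fallback in place, every record lacking a birth date reports the same implausible age, which looks like a data default and not like thousands of individual 150-year-olds.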

Tim Carmody has a great appreciation of HTML in Wired magazine: HTML Is Actually a Programming Language. Fight Me.

HTML is somehow simultaneously paper and the printing press for the electronic age. It’s both how we write and what we read. It’s the most democratic computer language and the most global. It’s the medium we use to connect with each other and publish to the world. It makes perfect sense that it was developed to serve as a library — an archive, a directory, a set of connections — for all digital knowledge.

I love HTML!

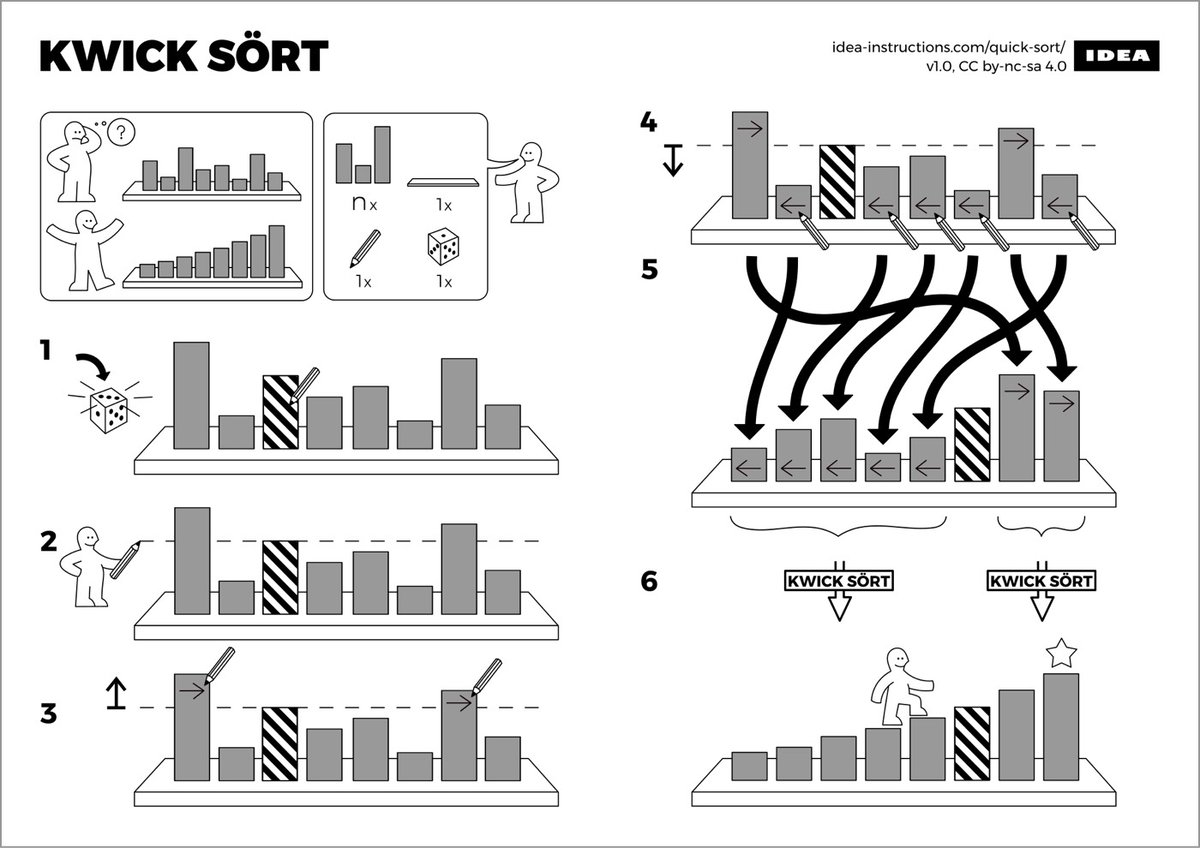

Let’s say you’ve got a bunch of books that need to be sorted alphabetically by author. What’s the fastest way to accomplish this task? Luckily, efficient sorting is a problem that’s been studied extensively in computer science and this TED-Ed video walks us through three possible sorts: bubble sort, insertion sort, and quicksort.

For more on sorting, check out Sorting Algorithms Visualized, sorting techniques visualized through Eastern European folk dancing, and a site where you can compare many different sorting algorithms with each other. (via the kid should see this)
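If you'd like to try two of those sorts on a shelf of your own, here is a rough Python sketch of insertion sort and quicksort applied to author names. The function names and the sample shelf are my own illustration, not taken from the video, and the quicksort builds new lists instead of partitioning in place, which keeps it short.

```python
def insertion_sort(books):
    """Sort author names by inserting each one into the sorted run to its left."""
    result = list(books)
    for i in range(1, len(result)):
        current = result[i]
        j = i - 1
        # Shift larger names one slot right until current fits.
        while j >= 0 and result[j] > current:
            result[j + 1] = result[j]
            j -= 1
        result[j + 1] = current
    return result

def quicksort(books):
    """Sort by picking a pivot, then recursively sorting what comes before and after it."""
    if len(books) <= 1:
        return list(books)
    pivot = books[len(books) // 2]
    before = [b for b in books if b < pivot]
    same = [b for b in books if b == pivot]
    after = [b for b in books if b > pivot]
    return quicksort(before) + same + quicksort(after)

shelf = ["Morrison", "Austen", "Le Guin", "Borges", "Woolf"]
print(insertion_sort(shelf))
print(quicksort(shelf))
```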

When you write some code and put it on a spacecraft headed into the far reaches of space, you need it to work, no matter what. Mistakes can mean loss of mission or even loss of life. In 2006, Gerard Holzmann of the NASA/JPL Laboratory for Reliable Software wrote a paper called The Power of 10: Rules for Developing Safety-Critical Code. The rules focus on testability, readability, and predictability:

- Avoid complex flow constructs, such as goto and recursion.

- All loops must have fixed bounds. This prevents runaway code.

- Avoid heap memory allocation.

- Restrict functions to a single printed page.

- Use a minimum of two runtime assertions per function.

- Restrict the scope of data to the smallest possible.

- Check the return value of all non-void functions, or cast to void to indicate the return value is useless.

- Use the preprocessor sparingly.

- Limit pointer use to a single dereference, and do not use function pointers.

- Compile with all possible warnings active; all warnings should then be addressed before release of the software.
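The rules were written with C in mind, so the following Python sketch only borrows the spirit of three of them: a fixed loop bound, two assertions per function, and checking a return value instead of trusting it. The `average_reading` function, the `MAX_SAMPLES` limit, and the 0 to 100 range are all hypothetical, invented for this example.

```python
MAX_SAMPLES = 8  # fixed upper bound on the loop, known before it runs

def average_reading(samples):
    """Average up to MAX_SAMPLES sensor readings (hypothetical example)."""
    # Two assertions: validate the inputs before using them.
    assert 0 < len(samples) <= MAX_SAMPLES, "sample count out of range"
    assert all(0.0 <= s <= 100.0 for s in samples), "reading out of range"

    total = 0.0
    # The loop runs at most MAX_SAMPLES times, never "until something happens".
    for i in range(min(len(samples), MAX_SAMPLES)):
        total += samples[i]
    return total / len(samples)

result = average_reading([20.0, 22.0, 24.0])
# Check the return value rather than assuming it is sane.
assert 0.0 <= result <= 100.0
print(result)
```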

All this might seem a little inside baseball if you’re not a software developer (I caught only about 75% of it — the video embedded above helped a lot), but the goal of the Power of 10 rules is to ensure that developers are working in such a way that their code does the same thing every time, can be tested completely, and is therefore more reliable.

Even here on Earth, perhaps more of our software should work this way. In 2011, NASA applied these rules in their analysis of unintended acceleration of Toyota vehicles and found 243 violations of 9 out of the 10 rules. Are the self-driving features found in today’s cars written with these rules in mind or can recursive, untestable code run off into infinities while it’s piloting people down the freeway at 70mph?

And what about AI? Anil Dash recently argued that today’s AI is unreasonable:

Amongst engineers, coders, technical architects, and product designers, one of the most important traits that a system can have is that one can reason about that system in a consistent and predictable way. Even “garbage in, garbage out” is an articulation of this principle — a system should be predictable enough in its operation that we can then rely on it when building other systems upon it.

This core concept of a system being reason-able is pervasive in the intellectual architecture of true technologies. Postel’s Law (“Be liberal in what you accept, and conservative in what you send.”) depends on reasonable-ness. The famous IETF keywords list, which offers a specific technical definition for terms like “MUST”, “MUST NOT”, “SHOULD”, and “SHOULD NOT”, assumes that a system will behave in a reasonable and predictable way, and the entire internet runs on specifications that sit on top of that assumption.

The very act of debugging assumes that a system is meant to work in a particular way, with repeatable outputs, and that deviations from those expectations are the manifestation of that bug, which is why being able to reproduce a bug is the very first step to debugging.

Into that world, let’s introduce bullshit. Today’s highly-hyped generative AI systems (most famously OpenAI) are designed to generate bullshit by design.

I bet NASA will be very slow and careful in deciding to run AI systems on spacecraft — after all, they know how 2001: A Space Odyssey ends just as well as the rest of us do.

Ilia Blinderman of The Pudding has written a pair of essays about how to make data-driven visual essays. Part 1 covers working with data.

It’s worth noting here that this first stage of data-work can be somewhat vexing: computers are great, but they’re also incredibly frustrating when they don’t do what you’d like them to do. That’s why it’s important to remember that you don’t need to worry — learning to program is exactly as infuriating and as dispiriting for you as it is for everyone else. I know this all too well: some people seem to be terrific at it without putting in all that much effort; then there was me, who first began writing code in 2014, and couldn’t understand the difference between a return statement and a print statement. The reason learning to code is so maddening is because it doesn’t merely involve learning a set number of commands, but a way of thinking. Remember that, and know that the little victories you amass when you finally run your loop correctly or manage to solve a particular data problem all combine to form that deeper understanding.

Part 2 is on the design process.

Before you begin visualizing your data, think through the most important points that you’re trying to communicate. Is the key message the growth of a variable over time? A disparity between quantities? The degree to which a particular value varies? A geographic pattern?

Once you have an idea of the essential takeaways you’d like your readers to understand, you can consider which type of visualization would be most effective at expressing it. During this step, I like to think of the pieces of work that I’ve got in my archive and see if any one of those is especially suitable for the task at hand.

Check out The Pudding for how they’ve applied these lessons to creating visual essays about skin tone on the cover of Vogue or how many top high school players make it to the NBA.

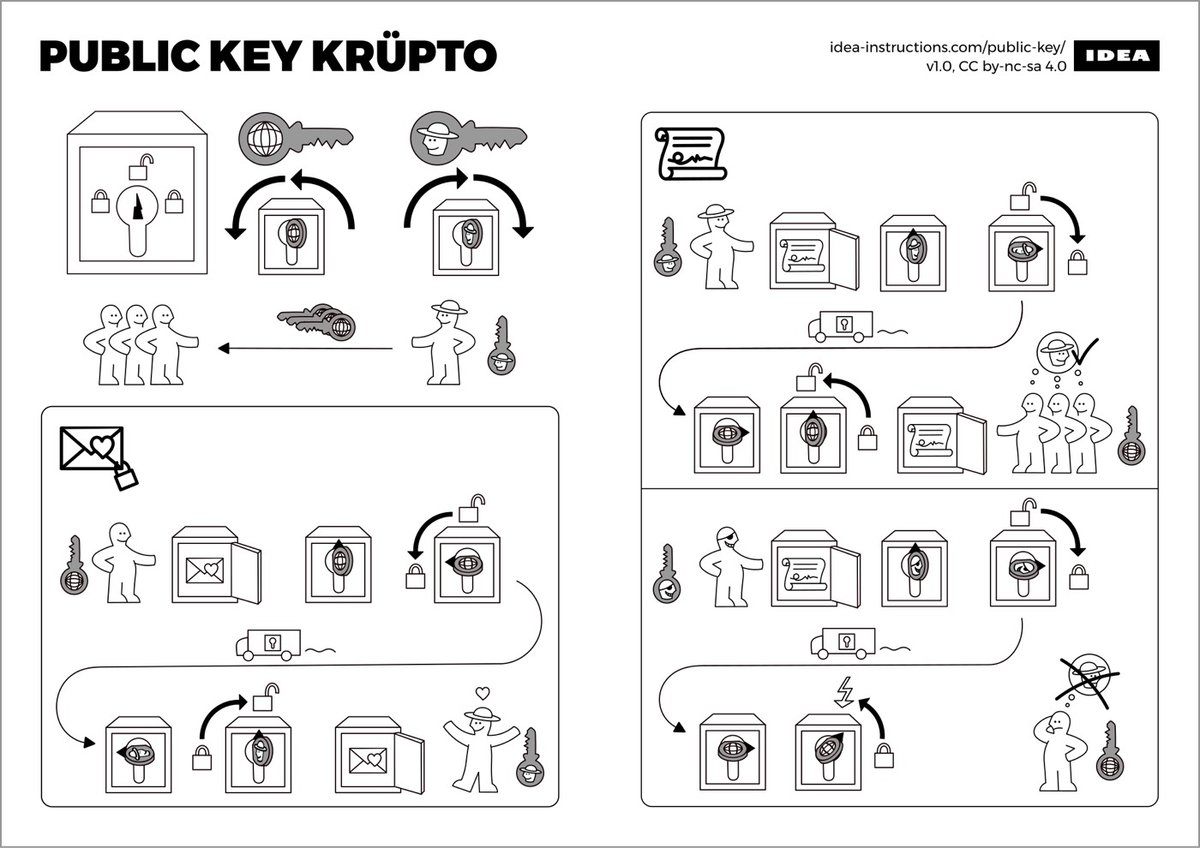

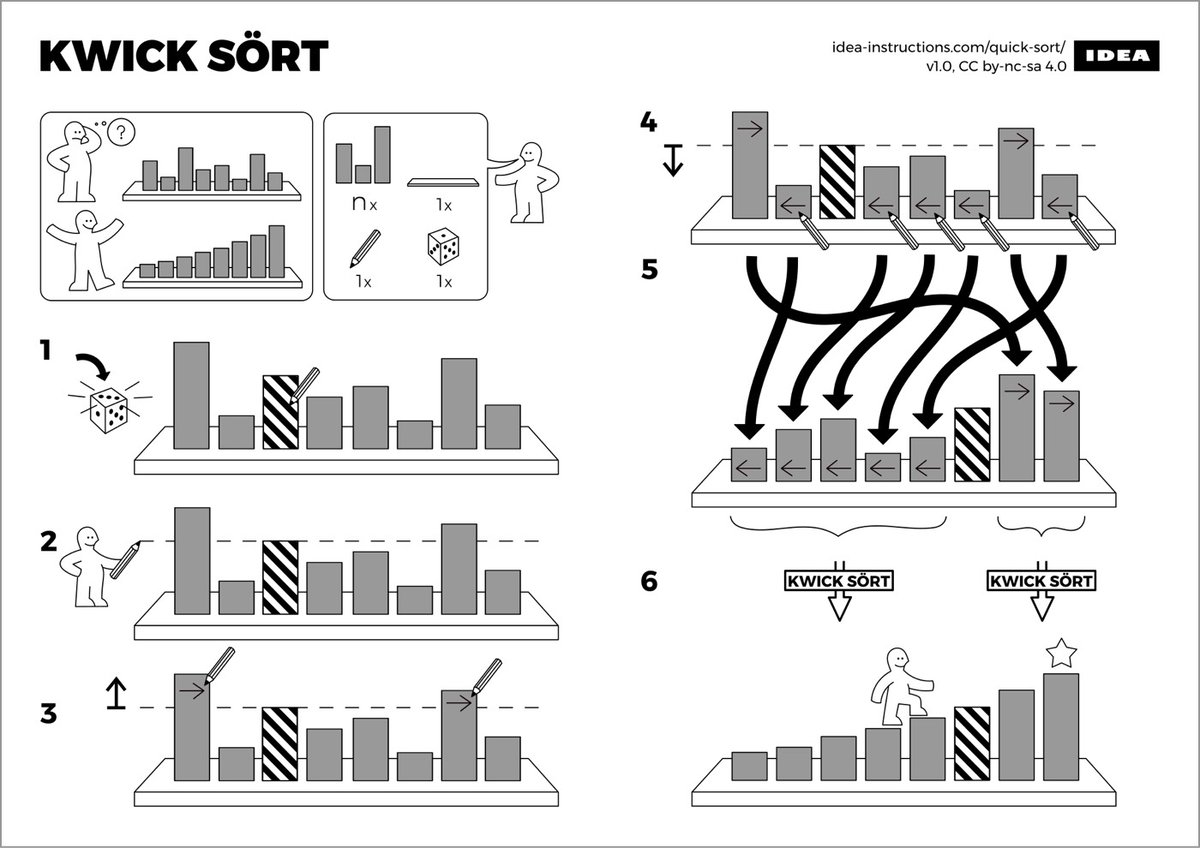

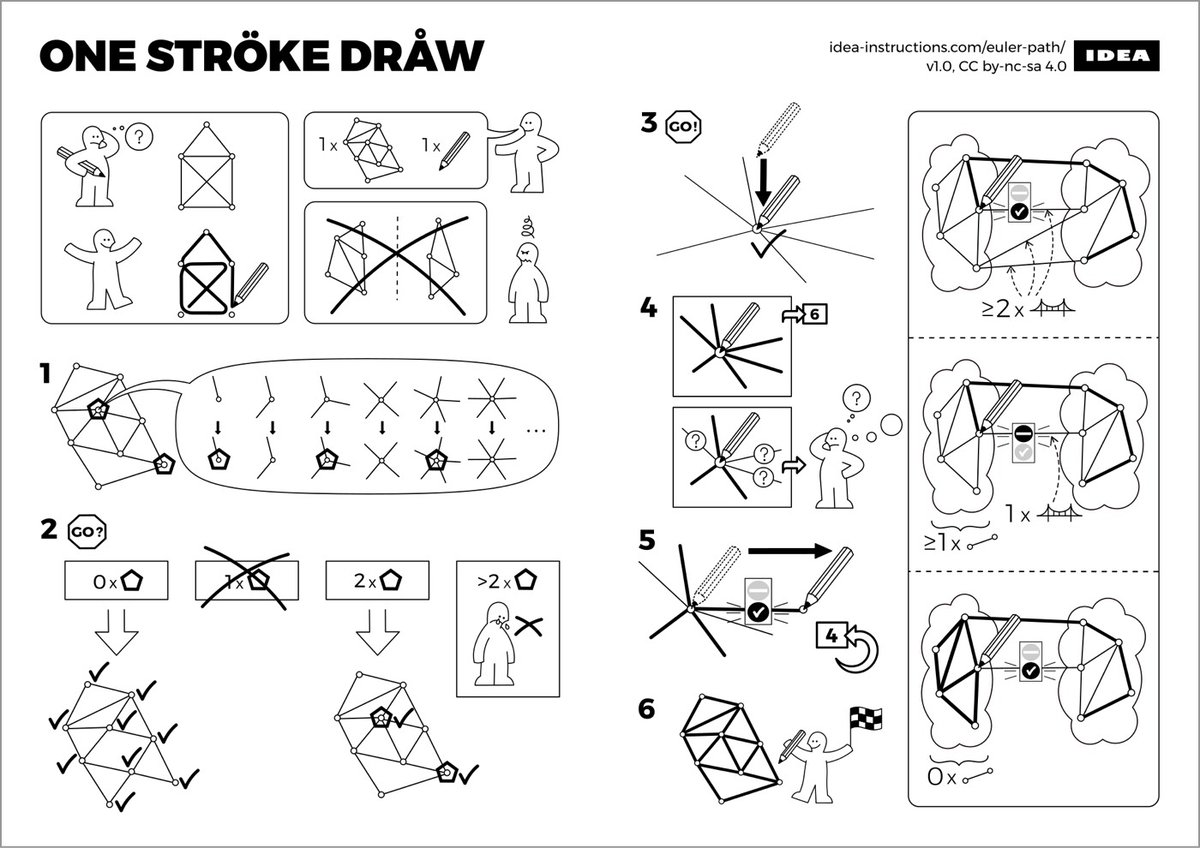

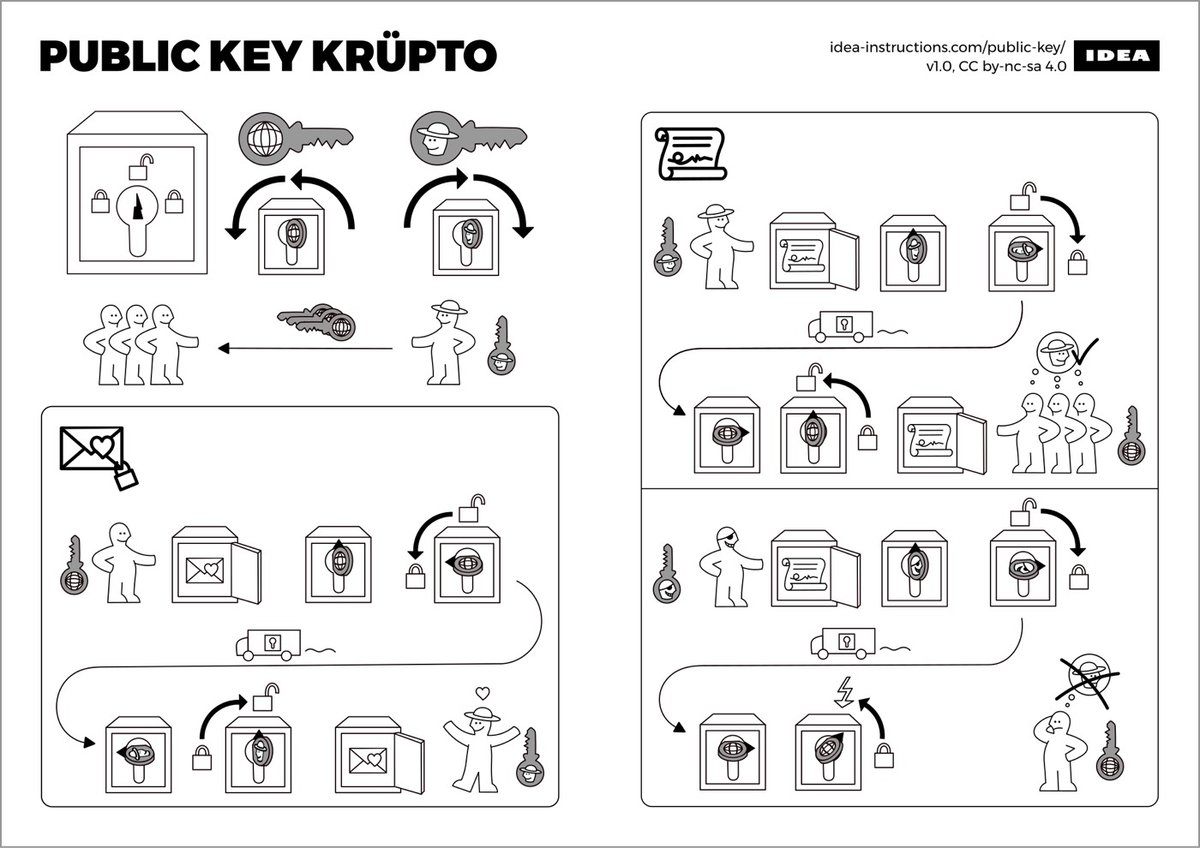

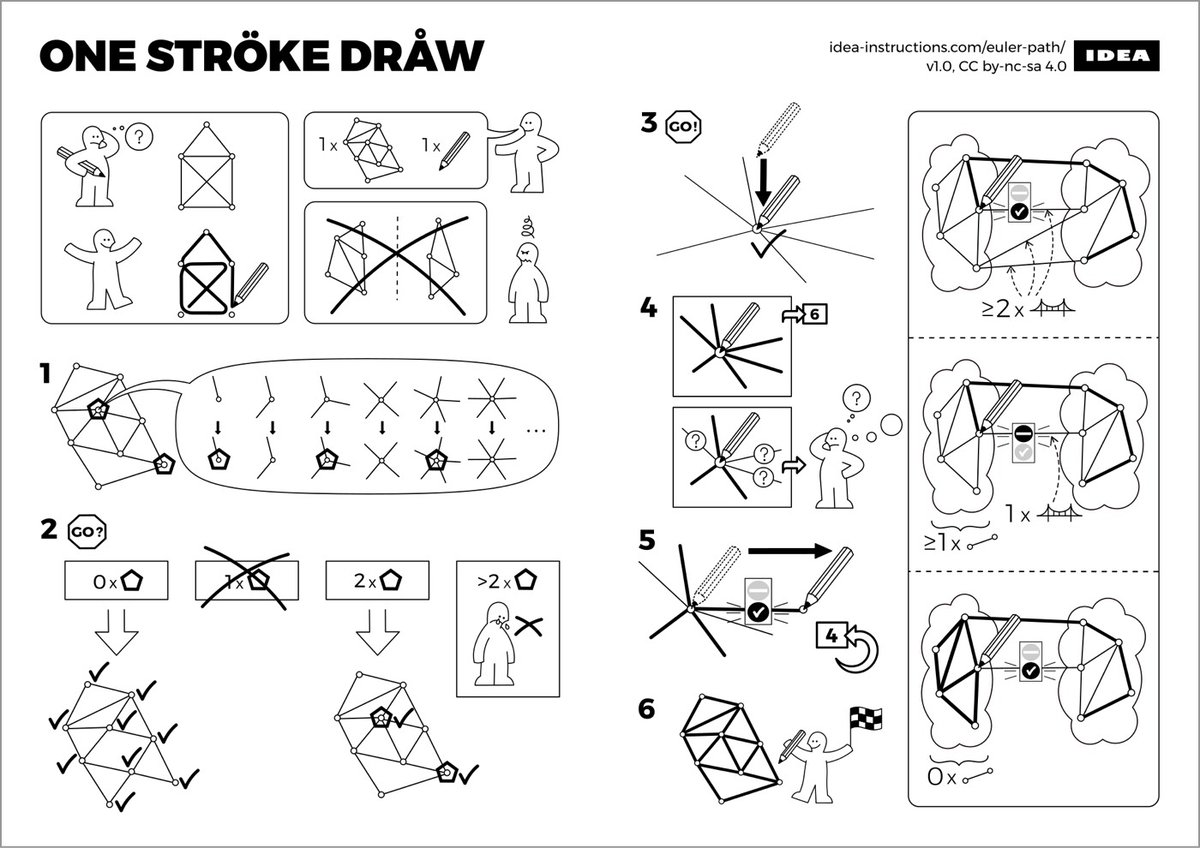

Sándor P. Fekete, Sebastian Morr, and Sebastian Stiller came up with these Ikea-style instructions for algorithms and data structures used in computer science. In addition to the three pictured above, there are also instructions for several other searches, trees, sorts, and scans.

From Feminist Frequency, a quick video biography of Ada Lovelace, which talks about the importance of her contribution to computing.

A mathematical genius and pioneer of computer science, Ada Lovelace not only created the very first computer program in the mid-1800s but also foresaw the digital future more than a hundred years to come.

This is part of Feminist Frequency’s Ordinary Women series, which also covered women like Ida B. Wells and Emma Goldman.

From the BBC, an hour-long documentary on Ada Lovelace, the world’s first computer programmer.

You might have assumed that the computer age began with some geeks out in California, or perhaps with the codebreakers of World War II. But the pioneer who first saw the true power of the computer lived way back, during the transformative age of the Industrial Revolution.

Happy Ada Lovelace Day, everyone!

Seymour Papert, a giant in the worlds of computing and education, died on Sunday aged 88.

Dr. Papert, who was born in South Africa, was one of the leading educational theorists of the last half-century and a co-director of the renowned Artificial Intelligence Laboratory at the Massachusetts Institute of Technology. In some circles he was considered the world’s foremost expert on how technology can provide new ways for children to learn.

In the pencil-and-paper world of the 1960s classroom, Dr. Papert envisioned a computing device on every desk and an internetlike environment in which vast amounts of printed material would be available to children. He put his ideas into practice, creating in the late ’60s a computer programming language, called Logo, to teach children how to use computers.

I missed out on using Logo as a kid, but I know many people for whom Logo was their introduction to computers and programming. The MIT Media Lab has a short remembrance of Papert as well.

When Grade-A nerds get together and talk about programming and math, a popular topic is P vs NP complexity. There’s a lot to P vs NP, but boiled down to its essence, according to the video:

Does being able to quickly recognize correct answers [to problems] mean there’s also a quick way to find [correct answers]?

Most people suspect that the answer to that question is “no”, but it remains famously unproven.

In fact, one of the outstanding problems in computer science is determining whether questions exist whose answer can be quickly checked, but which require an impossibly long time to solve by any direct procedure. Problems like the one listed above certainly seem to be of this kind, but so far no one has managed to prove that any of them really are so hard as they appear, i.e., that there really is no feasible way to generate an answer with the help of a computer.
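The gap between checking and finding is easy to feel in code. As a small sketch, take the subset sum problem (my choice of example, not one from the video): given a list of numbers, is there a subset that adds up to a target? Checking a proposed answer takes one quick pass, while the obvious way to find one tries every subset. The names `check` and `find` are hypothetical.

```python
from itertools import combinations

def check(numbers, subset, target):
    """Quickly verify a proposed answer: drawn from numbers, and sums to target?"""
    pool = list(numbers)
    for x in subset:
        if x not in pool:
            return False
        pool.remove(x)  # each number may be used only once
    return sum(subset) == target

def find(numbers, target):
    """Brute-force search: try every subset, up to 2**len(numbers) candidates."""
    for size in range(1, len(numbers) + 1):
        for subset in combinations(numbers, size):
            if sum(subset) == target:
                return list(subset)
    return None

numbers = [3, 34, 4, 12, 5, 2]
answer = find(numbers, 9)
print(answer, check(numbers, answer, 9))
```

Adding one more number to the list roughly doubles the work `find` might have to do, while `check` barely notices. Whether some cleverer `find` could always keep pace with `check` is the open question.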

Given that there’s so much mathematicians don’t know about prime numbers, you might be surprised to learn that there’s a very simple regular expression for detecting prime numbers:

/^1?$|^(11+?)\1+$/

If you’ve got access to Perl on the command line, try it out with some of these (just replace [number] with any integer):

perl -wle 'print "Prime" if (1 x shift) !~ /^1?$|^(11+?)\1+$/' [number]

An explanation is here which I admit I did not quite follow. A commenter at Hacker News adds a bit more context:

However while cute, it is very slow. It tries every possible factorization as a pattern match. When it succeeds, on a string of length n that means that n times it tries to match a string of length n against a specific pattern. This is O(n^2). Try it on primes like 35509, 195341, 526049 and 1030793 and you can observe the slowdown.
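Here is the same trick as a short Python sketch, which helped me see what is going on. The number is written in unary (n copies of "1"). The first half of the pattern catches 0 and 1; the second half grabs a group of two or more 1s and asks whether repeating that group fills the whole string, which only works if the group's length divides n. The `is_prime` and `NOT_PRIME` names are mine.

```python
import re

# Same pattern as the Perl one-liner: it matches unary strings whose length is NOT prime.
NOT_PRIME = re.compile(r"^1?$|^(11+?)\1+$")

def is_prime(n):
    """Return True if n is prime, by writing n in unary and testing the regex."""
    return NOT_PRIME.match("1" * n) is None

print([n for n in range(30) if is_prime(n)])
# prints [2, 3, 5, 7, 11, 13, 17, 19, 23, 29]
```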

Lauren Ipsum is a book about computer science for kids (age 10 and up) published by No Starch Press.

Meet Lauren, an adventurer who knows all about solving problems. But she’s lost in the fantastical world of Userland, where mail is delivered by daemons and packs of wild jargon roam.

Lauren sets out for home, traveling through a journey of puzzles, from the Push and Pop Cafe to the Garden of the Forking Paths. As she discovers the secrets of Userland, Lauren learns about computer science without even realizing it-and so do you!

Sounds intriguing. And 1000 bonus points for making the protagonist a girl. There’s an older self-published version of the book that’s been out for a couple of years. I like the older description slightly better:

Laurie is lost in Userland. She knows where she is, or where she’s going, but maybe not at the same time. The only way out is through Jargon-infested swamps, gates guarded by perfect logic, and the perils of breakfast time at the Philosopher’s Diner. With just her wits and the help of a lizard who thinks he’s a dinosaur, Laurie has to find her own way home.

Lauren Ipsum is a children’s story about computer science. In 20 chapters she encounters dozens of ideas from timing attacks to algorithm design, the subtle power of names, and how to get a fair flip out of even the most unfair coin.

Has anyone read it?

You can now program by tweeting snippets of Wolfram Language code to their Tweet-a-Program bot, @WolframTaP. To test it out, I tweeted:

And got back:

Cool!

David Auerbach writes about the high you get from coding.

These days I write more than I code, but one of the things I miss about programming is the coder’s high: those times when, for hours on end, I would lock my vision straight at the computer screen, trance out, and become a human-machine hybrid zipping through the virtual architecture that my co-workers and I were building. Hunger, thirst, sleepiness, and even pain all faded away while I was staring at the screen, thinking and typing, until I’d reach the point of exhaustion and it would come crashing down on me.

It was good for me, too. Coding had a smoothing, calming effect on my psyche, what I imagine meditation does to you if you master it.

Auerbach asserts that there’s something different about the flow state one enters while programming, compared to those brought on by making art, writing, etc. Over the years, I’ve written, designed, and programmed for a living, and programming is, by far, the thing that gets me the best high. I’ve definitely had productive multi-hour Photoshop and writing benders, but coding blocks out the world and the rest of myself like nothing else. In attempting to articulate to friends why I enjoy programming more than design or writing, I’ve been explaining it like this: for me, the coding process is all or nothing and has a definitive end.

When code doesn’t work within the specifications, it’s 100% broken. It won’t compile, the web server throws an error, or gives the wrong output. Writing and design almost always sorta work…even a first draft or an initial design communicates something to the reader/viewer. When the code works within the specifications, it’s done. The writing or design process is never done; even a great piece of writing or the best design can be improved incrementally or even scrapped altogether to go in a different and potentially more fruitful direction. Maybe, for me, programming’s definite ending is what makes it so enjoyable. The flow state comes from knowing that, while the journey is difficult and maddening and messily creative (just as with writing or design), there’s a definite point at which it’s done and you can move on to the next challenge. (via 5 intriguing things)

It’s possible to make a .zip file that contains itself infinitely many times. So a 440 byte file could conceivably be expanded into eleventy dickety two zootayunafliptobytes of data and beyond. Here’s the full explanation.

Programming sorting techniques visualized through Eastern European folk dancing. For instance, here’s the bubble sort with Hungarian dancing:

See also sorting algorithms visualized. (via @viljavarasto)

Stephen Wolfram (of Mathematica and A New Kind of Science fame) says the upcoming Wolfram Language is his company’s “most important technology project yet”.

We call it the Wolfram Language because it is a language. But it’s a new and different kind of language. It’s a general-purpose knowledge-based language. That covers all forms of computing, in a new way.

There are plenty of existing general-purpose computer languages. But their vision is very different — and in a sense much more modest — than the Wolfram Language. They concentrate on managing the structure of programs, keeping the language itself small in scope, and relying on a web of external libraries for additional functionality. In the Wolfram Language my concept from the very beginning has been to create a single tightly integrated system in which as much as possible is included right in the language itself.

The demo video is a little mind-melting in parts:

Not sure if this will take off or not, but the idea behind it all is worth exploring.

This video visualization of 15 different sorting algorithms is mesmerizing. (Don’t forget the sound.)

An explanation of the process. You can play with several different kinds of sorts here.

Black Perl is a poem written in valid Perl 3 code:

BEFOREHAND: close door, each window & exit; wait until time.

open spellbook, study, read (scan, select, tell us);

write it, print the hex while each watches,

reverse its length, write again;

kill spiders, pop them, chop, split, kill them.

unlink arms, shift, wait & listen (listening, wait),

sort the flock (then, warn the "goats" & kill the "sheep");

kill them, dump qualms, shift moralities,

values aside, each one;

die sheep! die to reverse the system

you accept (reject, respect);

next step,

kill the next sacrifice, each sacrifice,

wait, redo ritual until "all the spirits are pleased";

do it ("as they say").

do it(*everyone***must***participate***in***forbidden**s*e*x*).

return last victim; package body;

exit crypt (time, times & "half a time") & close it,

select (quickly) & warn your next victim;

AFTERWORDS: tell nobody.

wait, wait until time;

wait until next year, next decade;

sleep, sleep, die yourself,

die at last

# Larry Wall

It’s not Shakespeare, but it’s not bad for executable code.

This is a surprisingly helpful activity for learning about regular expressions. (via @bdeskin)

From Stack Overflow, a question about how to efficiently sort a pile of socks.

Yesterday I was pairing the socks from the clean laundry, and figured out the way I was doing it is not very efficient. I was doing a naive search — picking one sock and “iterating” the pile in order to find its pair. This requires iterating over n/2 * n/4 = n^2/8 socks on average.

As a computer scientist I was thinking what I could do? sorting (according to size/color/…) of course came into mind to achieve O(NlogN) solution.

And everyone gets it wrong. The correct answer is actually:

1) Throw all your socks out.

2) Go to Uniqlo and buy 15 identical pairs of black socks.

3) When you want to wear socks, pick any two out of the drawer.

4) When you notice your socks are wearing out, goto step 1.

QED
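For anyone not ready to throw out their socks, here is a sketch of a single-pass alternative to both the naive search and the sort from the question. Note that this is a different technique from the one the asker proposes: it uses a dictionary (hashing) instead of sorting, and it assumes each sock can be described by something hashable, here a made-up `(color, size)` tuple. The `pair_socks` name is hypothetical.

```python
def pair_socks(pile):
    """Pair socks in one pass by remembering the unmatched sock of each kind."""
    waiting = {}  # description -> one unmatched sock seen so far
    pairs = []
    for sock in pile:
        if sock in waiting:
            # Found the mate of a sock we set aside earlier.
            pairs.append((waiting.pop(sock), sock))
        else:
            waiting[sock] = sock
    return pairs, list(waiting.values())

pile = [("black", "M"), ("red", "M"), ("black", "M"), ("blue", "L"), ("red", "M")]
pairs, leftovers = pair_socks(pile)
print(pairs)
print(leftovers)
```

Each sock is looked at once, so the work grows in step with the size of the pile instead of with its square.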

An extensive collection gathered from all over the internet of the source code and documentation for NASA’s Apollo and Gemini programs. Here’s part of the source code for Apollo 11’s guidance computer.

And here’s an interesting tidbit about the core rope memory used for the Apollo guidance computer:

Fun fact: the actual programs in the spacecraft were stored in core rope memory, an ancient memory technology made by (literally) weaving a fabric/rope, where the bits were physical rings of ferrite material.

“Core” memory is resistant to cosmic rays. The state of a core bit will not change when bombarded by radiation in Outer Space. Can’t say the same of solid state memory.

Woven memory! Also called LOL memory:

Software written by MIT programmers was woven into core rope memory by female workers in factories. Some programmers nicknamed the finished product LOL memory, for Little Old Lady memory.

For her yearly month-long project at Slate, Annie Lowrey wanted to learn how to code. She picked Ruby and became interested in the story of _why, the mysterious Ruby hacker who disappeared suddenly in 2009. In a long article at Slate, Lowrey shares her experience learning to program and, oh, by the way, tracks down _why. Sort of.

The pickaxe book first shows you how to install Ruby on your computer. (That leads to a strange ontological question: Is a programming language a program? Basically, yes. You can download it from the Internet so that your computer will know how to speak it.)

Then the pickaxe book moves on to stuff like this: “Ruby is a genuine object-oriented language. Everything you manipulate is an object, and the results of those manipulations are themselves objects. However, many languages make the same claim, and their users often have a different interpretation of what object-oriented means and a different terminology for the concepts they employ.”

Programming manual, or Derrida? As I pressed on, it got little better. Nearly every page required aggressive Googling, followed by dull confusion. The vocabulary alone proved huge and complex. Strings! Arrays! Objects! Variables! Interactive shells! I kind of got it, I would promise myself. But the next morning, I had retained nothing. Ruby remained little more than Greek to me.

Very much trying not to read the entirety of this beginner’s guide to developing iOS apps published by Apple because then I’ll be tempted to actually make one.

Codify is an iPad app that allows you to code iPad games on your iPad.

We think Codify is the most beautiful code editor you’ll use, and it’s easy. Codify is designed to let you touch your code. Want to change a number? Just tap and drag it. How about a color, or an image? Tapping will bring up visual editors that let you choose exactly what you want.

Codify is built on the Lua programming language. A simple, elegant language that doesn’t rely too much on symbols — a perfect match for iPad.

(via df)

Sort of a nerdy version of Texts From Last Night, Commit Logs From Last Night chronicles the often frustrating process of committing working code.

SUPER ugly, but it works

Added a bunch of semi colons that were missing for some balls weird reason.

Oops, left some debugging crap

Dennis Ritchie passed away last week. Ritchie created the C programming language, was a key contributor to UNIX, and wrote an early definitive work on programming, The C Programming Language.

We lost a tech giant today. Dennis MacAlistair Ritchie, co-creator of Unix and the C programming language with Ken Thompson, has passed away at the age of 70. Ritchie has made a tremendous amount of contribution to the computer industry, directly and indirectly affecting (improving) the lives of most people in the world, whether you know it or not.

These sorts of comparisons are inexact at best, but Ritchie’s contribution to the technology industry rivals that of Steve Jobs’…Ritchie’s was just less noticed by non-programmers.
